Data Staging Configuration & Monitoring

Tools

Figma, FigJam, Visual Studio Code & UserTesting

Team

Spotfire Data Virtualization Team

(formerly TDV - TIBCO Data Virtualization)

Role

Senior UX/UI Designer

Duration

2025 - 2026 Project

Project Overview

Spotfire Data Virtualization allows organizations to query data across multiple systems without moving the data into a single database. When queries repeatedly access external sources such as enterprise databases, cloud warehouses, and APIs, performance can slow down.

To improve performance, the team introduced data staging, which stores frequently used datasets inside the system.

As part of this initiative, I designed the staging configuration workflow and the staging monitoring dashboard, enabling users to configure staging settings and monitor staging activity.

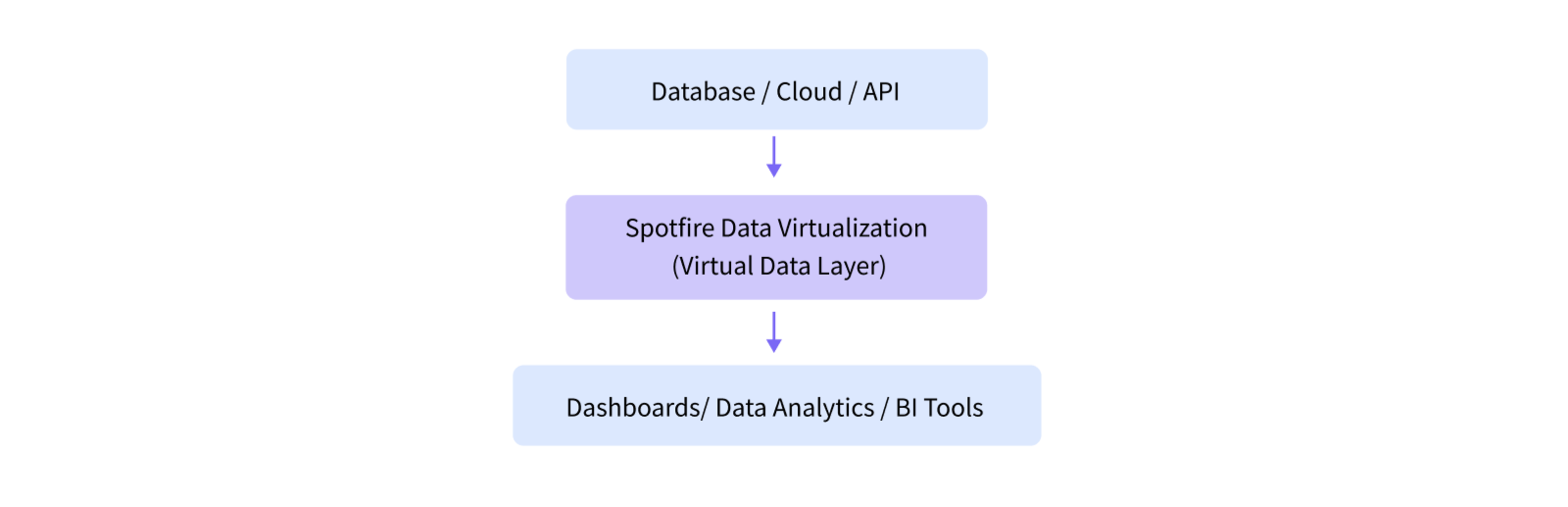

Understanding Data Virtualization

Data virtualization is a technology that allows users to access and analyze data from multiple systems without physically moving the data into a single database.

Instead of copying data into a warehouse, a virtualization platform creates a unified virtual layer that connects to many sources (such as databases, cloud platforms, and APIs) and allows users to query them as if they were one system.

This approach helps organizations combine data from different systems quickly while avoiding the complexity of building large data pipelines.

Why Are Businesses Choosing Data Virtualization?

Industry research shows that around 60% of organizations have adopted data virtualization as part of their data integration strategy to simplify access to data across multiple systems.

At the same time, the global data virtualization market continues to grow rapidly as companies manage increasing amounts of distributed data across cloud platforms, databases, and applications.

Unified data access: Query multiple systems as one dataset

Faster analytics: No need to move or duplicate large datasets

Less complex pipelines: Reduce ETL processes and data engineering work

Real-time data: Access the most up-to-date information directly from source systems

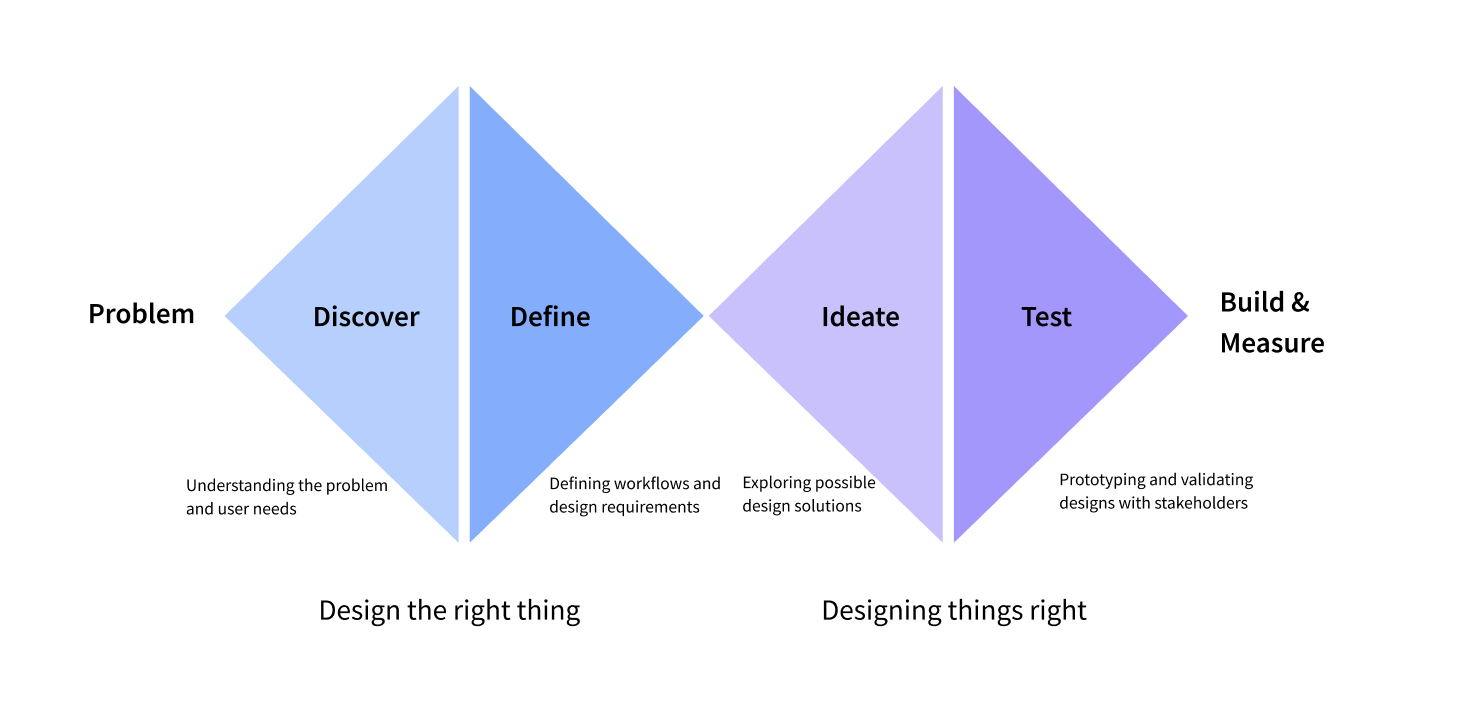

Design Process

Problem

Users reported that complex queries across multiple data sources often took a long time to process. To improve performance, the team introduced data staging, which stores frequently accessed datasets so that queries run faster and place less load on source systems.

However, staging requires technical configuration typically handled by database specialists. The challenge was to design a workflow that allows users with limited technical knowledge to configure and monitor staging operations.
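As a rough illustration of the staging idea described above, here is a minimal sketch in Python. It is not the product's implementation: the `StagedDataset` class, the `fetch_orders` source, and the state names (`NOT_STAGED`, `READY`, `FAILED`) are all hypothetical, chosen only to show how a staged copy can serve queries and expose a status that a monitoring view could display.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Row = Dict[str, object]


@dataclass
class StagedDataset:
    """Hypothetical staged copy of one source dataset, with a status for monitoring."""
    name: str
    fetch_from_source: Callable[[], List[Row]]
    rows: List[Row] = field(default_factory=list)
    status: str = "NOT_STAGED"  # assumed states: NOT_STAGED, READY, FAILED

    def refresh(self) -> None:
        """Copy the dataset from its source into local storage."""
        try:
            self.rows = list(self.fetch_from_source())
            self.status = "READY"
        except Exception:
            # Record the failure so a monitoring view can surface it.
            self.status = "FAILED"

    def query(self) -> List[Row]:
        """Serve from the staged copy when it is ready, otherwise go to the source."""
        if self.status == "READY":
            return self.rows
        return self.fetch_from_source()


# Usage: count how often the (simulated) source system is hit.
source_calls = 0


def fetch_orders() -> List[Row]:
    """Stand-in for a slow external source (hypothetical data)."""
    global source_calls
    source_calls += 1
    return [{"order_id": 1, "total": 40}, {"order_id": 2, "total": 60}]


orders = StagedDataset("orders", fetch_orders)
orders.query()    # not staged yet: goes to the source
orders.refresh()  # staging run: reads the source once
orders.query()    # served from the staged copy
orders.query()    # served from the staged copy
```

In this sketch the source is read once for the unstaged query and once for the staging run; later queries are answered from the staged copy. The `status` field stands in for the kind of state a monitoring dashboard would show for each staging job.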

Outcomes

Understanding the problem and defining requirements

Worked with the team to review user feedback about slow query performance and explore staging as a solution. Collected requirements, discussed how the backend staging process would work, and defined what information should appear in the user interface.

Defining the staging workflow

Defined the structure of the staging configuration workflow in Workbench and identified the information needed to monitor staging activity, including staging states and system status.

Designing the staging configuration experience

Designed the staging configuration interface and organized staging settings into a clear, guided workflow.

Designing the staging monitoring dashboard

Designed the staging monitoring dashboard to provide visibility into staging activity, system status, and failed staging processes.

Impact

The new staging workflow made it possible for analysts to configure staging without needing deep database expertise.

The monitoring dashboard provides visibility into staging operations running in the background, allowing administrators to quickly detect failures and investigate staging jobs.

Overall, the solution makes staging operations simpler to configure and easier to monitor within the platform.

Project Details

PDF with full project details - VIEW FULL CASE STUDY

Feature release website - Spotfire Data Virtualization 8.9 Release (UX/UI design and written content created by me).